Understanding SAM Forecasting Results

Overview

SAM provides comprehensive forecasting outputs designed to support both technical analysis and business decision-making. This guide explains how to interpret all 25+ metrics and use them effectively for strategic planning.

Primary Outputs

1. Forecast Data (CSV Export)

Professional CSV output with forecast data, accuracy metrics, and performance indicators for business analysis

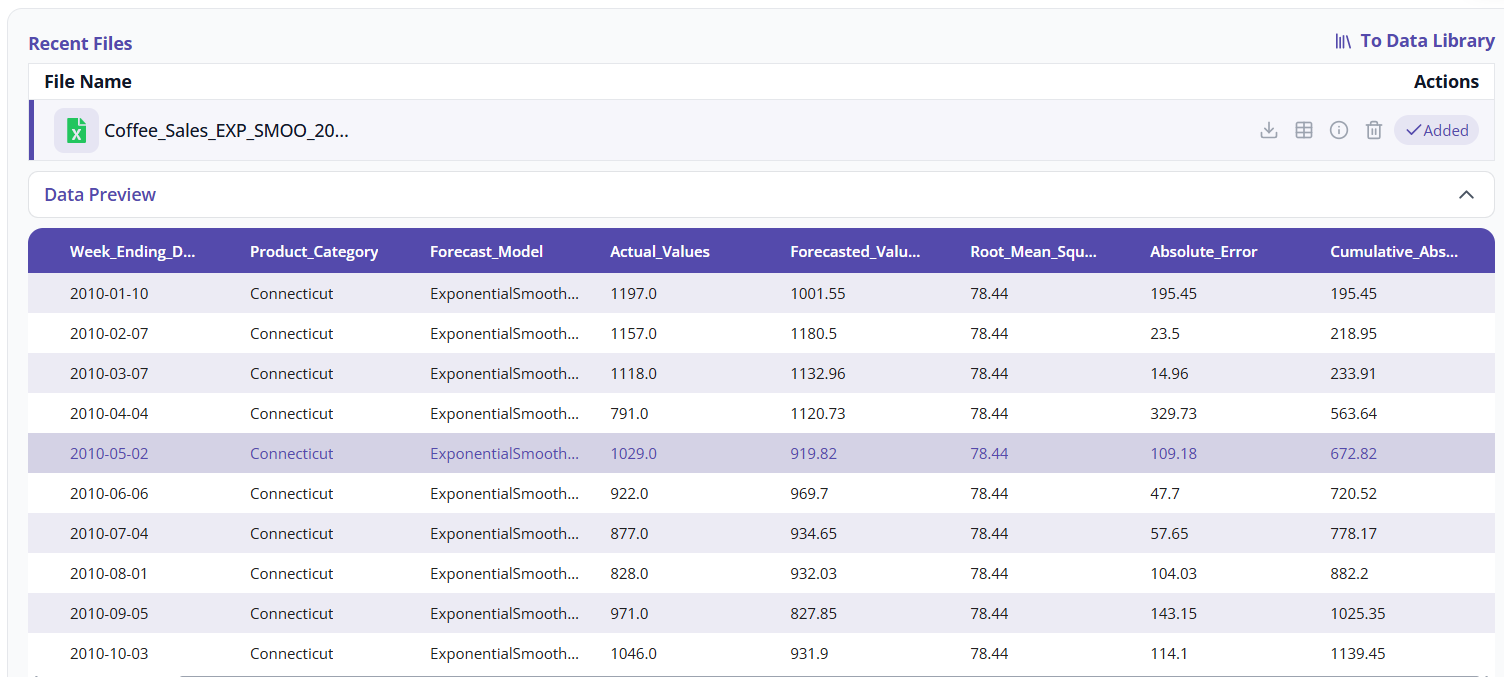

Standardized 9-Column Format:

Week | Week_Ending_Date | Product_Category | Forecast_Model | Actual_Values | Forecasted_Values | Root_Mean_Square_Error | Absolute_Error | Cumulative_Absolute_Error

Key Features:

- Historical Fit: Shows how well models captured past patterns

- Validation Period: Out-of-sample accuracy assessment

- Future Forecasts: Predictions for your specified horizon

- Multiple Models: Compare performance across different algorithms

- Category Breakdown: Separate forecasts for each product/region/segment
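If you post-process the CSV export, you can recompute the two error columns as a consistency check. The guide does not define either column, so the sketch below assumes two things. First, Absolute_Error is |Actual_Values - Forecasted_Values|. Second, Cumulative_Absolute_Error is its running sum within each Product_Category and Forecast_Model pair, with rows already sorted by week. The function name `recompute_errors` and the sample rows are hypothetical.

```python
import csv
import io
from collections import defaultdict


def recompute_errors(csv_text: str) -> list[dict]:
    """Recompute absolute and cumulative absolute error for a SAM-style export.

    Assumption: Cumulative_Absolute_Error is a running sum of Absolute_Error
    per (Product_Category, Forecast_Model), with rows already in week order.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    running = defaultdict(float)
    for row in rows:
        if not row["Actual_Values"]:
            # Future forecast weeks have no actual value, so no error yet.
            continue
        key = (row["Product_Category"], row["Forecast_Model"])
        error = abs(float(row["Actual_Values"]) - float(row["Forecasted_Values"]))
        running[key] += error
        row["Absolute_Error"] = error
        row["Cumulative_Absolute_Error"] = running[key]
    return rows


# Hypothetical sample: two historical weeks and one future week.
sample = """Week,Product_Category,Forecast_Model,Actual_Values,Forecasted_Values
1,A,Prophet,100,90
2,A,Prophet,110,115
3,A,Prophet,,120
"""
for row in recompute_errors(sample):
    print(row["Week"], row.get("Cumulative_Absolute_Error"))
```

Rows for future weeks are left without error values, which matches the idea that only the historical and validation periods can be scored.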

2. Visual Analytics (Interactive Charts)

Chart Components:

- Actual vs Predicted Lines: Visual accuracy assessment

- Error Bands: Uncertainty visualization with confidence intervals

- Trend Indicators: Growth direction and magnitude

- Seasonal Patterns: Cyclical behavior identification

- Model Comparisons: Side-by-side performance visualization

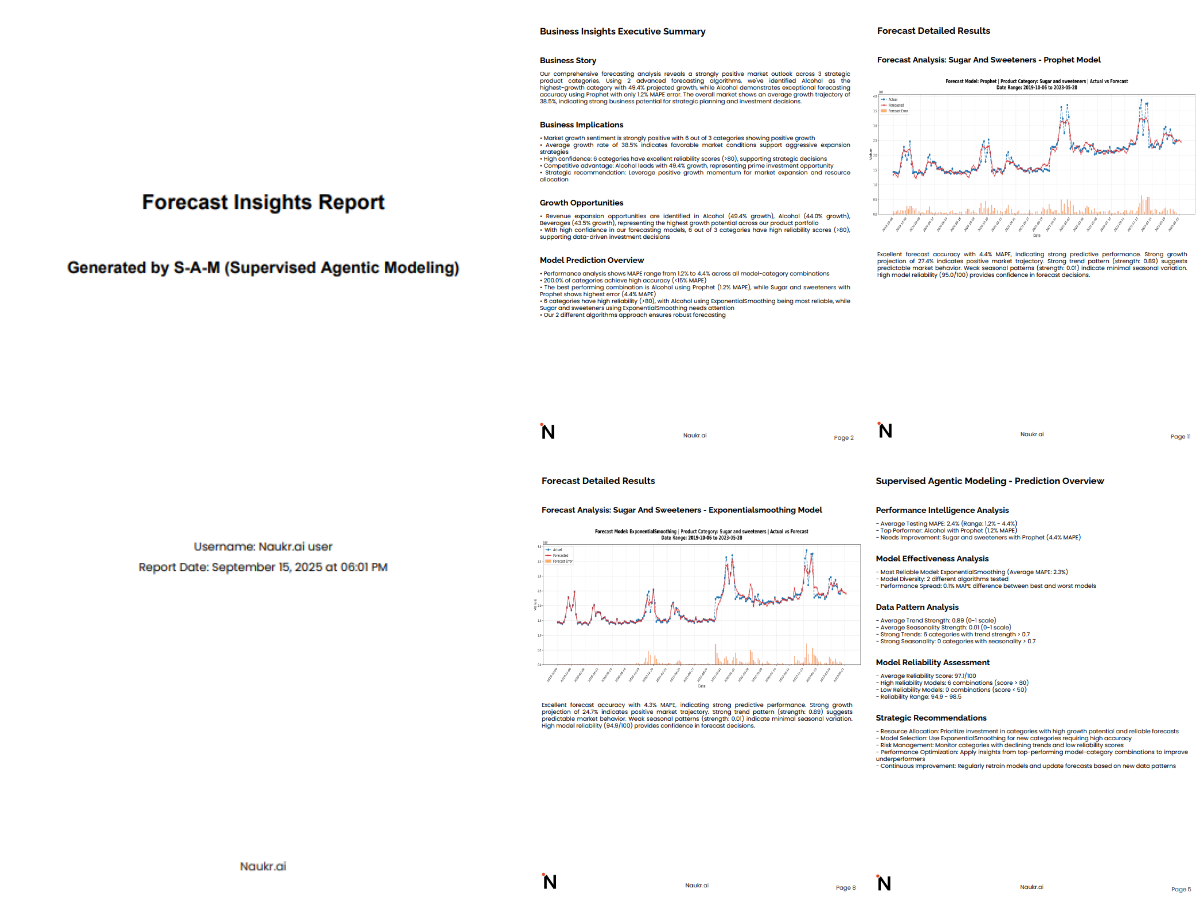

3. Executive Summary (PDF Report)

Complete executive PDF report with model performance, visual analytics, business insights, and strategic recommendations

Multi-Page Professional Report:

- Title Page: Project overview and generation date

- Performance Summary: Model rankings and recommendations

- Visual Forecasts: All charts included with captions

- Business Insights: Key findings and strategic implications

- Technical Glossary: Metric definitions and interpretations

Understanding Accuracy Metrics

Primary Accuracy Indicators

RMSE (Root Mean Square Error)

What it measures: Overall prediction accuracy in original units

- Excellent: < 5% of data mean

- Good: 5-15% of data mean

- Fair: 15-30% of data mean

- Poor: > 30% of data mean

Business Interpretation:

Example: Sales RMSE = 1,200 units

• If average sales = 10,000 units → 12% error (Good)

• If average sales = 50,000 units → 2.4% error (Excellent)
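You can script the same check. This is a minimal sketch, not SAM's internal code. `rmse` computes root mean square error from paired actual and forecast values. The hypothetical helper `rmse_rating` applies the bands above to RMSE expressed as a share of the data mean. The guide does not say how boundary values are graded, so this sketch assumes a value of exactly 5% or 15% goes to the worse grade. A value of exactly 30% counts as Fair, because Poor is defined as > 30%.

```python
import math
from typing import Sequence


def rmse(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Root mean square error, in the same units as the data."""
    if len(actuals) != len(forecasts) or not actuals:
        raise ValueError("need two non-empty sequences of equal length")
    squared = [(a - f) ** 2 for a, f in zip(actuals, forecasts)]
    return math.sqrt(sum(squared) / len(squared))


def rmse_rating(rmse_value: float, data_mean: float) -> str:
    """Grade RMSE as a percentage of the data mean (hypothetical helper)."""
    if data_mean == 0:
        raise ValueError("data mean is zero; relative RMSE is undefined")
    pct = 100 * rmse_value / abs(data_mean)
    if pct < 5:
        return "Excellent"
    if pct < 15:
        return "Good"
    if pct <= 30:
        return "Fair"
    return "Poor"


# The two scenarios from the example above.
print(rmse_rating(1_200, 10_000))
print(rmse_rating(1_200, 50_000))
```

The two calls reproduce the example: 12% of the mean grades as Good, and 2.4% grades as Excellent.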

MAPE (Mean Absolute Percentage Error)

What it measures: Average percentage error across all predictions

- Excellent: < 5%

- Good: 5-10%

- Fair: 10-20%

- Poor: > 20%

Business Interpretation:

MAPE = 8.5% means:

• On average, forecasts deviate from actual values by 8.5%; individual periods can miss by more or less

• For a $100K revenue forecast, expect an average error of roughly ±$8.5K

• Suitable for budgeting and planning purposes
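Here is a minimal sketch of the MAPE calculation. It assumes the standard definition: the mean of |actual - forecast| / |actual|, multiplied by 100. The helper `typical_error` is hypothetical. It converts a MAPE into the rough dollar figure used in the $100K example. MAPE is measured against actual values, not forecasts, so that figure is only an approximation.

```python
from typing import Sequence


def mape(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent.

    MAPE is undefined when an actual value is zero, so such data is rejected.
    """
    if len(actuals) != len(forecasts) or not actuals:
        raise ValueError("need two non-empty sequences of equal length")
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined when an actual value is zero")
    ratios = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)]
    return 100 * sum(ratios) / len(ratios)


def typical_error(forecast_value: float, mape_pct: float) -> float:
    """Rough average error magnitude implied by a MAPE (hypothetical helper)."""
    return forecast_value * mape_pct / 100


print(mape([100, 200], [110, 180]))  # both periods are 10% off
print(typical_error(100_000, 8.5))   # the $100K example above
```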

Simplified Quality Ratings

Accuracy Assessment

Our AI automatically grades model performance:

- Excellent (MAPE < 5%): High confidence for strategic decisions

- Good (MAPE 5-10%): Reliable for operational planning

- Fair (MAPE 10-20%): Useful for directional guidance

- Poor (MAPE > 20%): Consider additional data or different approach
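You can express the grading rule above as a small lookup. This sketch is an assumption about how the bands are applied, not SAM's implementation. In particular, a MAPE of exactly 5% or 10% goes to the worse of the two adjacent grades. Exactly 20% stays Fair, because Poor is defined as > 20%.

```python
def accuracy_grade(mape_pct: float) -> str:
    """Map a MAPE (in percent) to the four grades listed above.

    Boundary handling is an assumption: 5% -> Good, 10% -> Fair, 20% -> Fair.
    """
    if mape_pct < 5:
        return "Excellent"
    if mape_pct < 10:
        return "Good"
    if mape_pct <= 20:
        return "Fair"
    return "Poor"


for model, value in [("Prophet", 4.2), ("SARIMA", 8.1), ("N-HiTS", 3.8)]:
    print(model, accuracy_grade(value))
```

Applied to the example MAPE values in the model rankings table, the lookup returns Excellent for Prophet and N-HiTS and Good for SARIMA. Those grades match the table.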

Confidence Levels

Risk assessment for forecast reliability:

- High: Low variability, consistent patterns, strong model fit

- Medium: Moderate uncertainty, acceptable for most planning

- Low: High variability, use with caution, consider ranges

Business Intelligence Metrics

Growth and Trend Analysis

Growth Rate Percentage

Calculation: (Forecast Mean - Historical Mean) / Historical Mean × 100

Business Use:

- Positive Growth: Expansion planning, resource allocation

- Negative Growth: Cost management, efficiency improvements

- Stable Growth: Maintenance mode, operational optimization

Forecast Trend Direction

- Increasing: Upward trajectory, growth opportunities

- Decreasing: Declining pattern, intervention needed

- Stable: Consistent performance, predictable planning
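The guide does not say how SAM assigns these labels. One simple approach, sketched here as an assumption, fits a least-squares line through the forecast values and compares its slope with the average level. The `tolerance` of 0.5% per period is a hypothetical cut-off for "Stable", not a documented SAM setting.

```python
from typing import Sequence


def trend_direction(values: Sequence[float], tolerance: float = 0.005) -> str:
    """Label a forecast path as Increasing, Decreasing, or Stable.

    Fits an ordinary least-squares line through the values and compares the
    slope per period with the mean level. `tolerance` is hypothetical.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two forecast points")
    x_mean = (n - 1) / 2  # mean of the period indices 0..n-1
    y_mean = sum(values) / n
    if y_mean == 0:
        raise ValueError("mean level must be non-zero")
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    relative_slope = (num / den) / abs(y_mean)
    if relative_slope > tolerance:
        return "Increasing"
    if relative_slope < -tolerance:
        return "Decreasing"
    return "Stable"


print(trend_direction([100, 110, 120, 130]))
```

Scaling the slope by the mean level lets one tolerance work for series of very different sizes, from hundreds of units to millions of dollars.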

Historical vs Forecast Values

Compare past performance with future projections:

Historical Mean: 45,000 units/week

Forecast Mean: 52,000 units/week

Growth Rate: +15.6% (Strong growth expected)
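Here is the growth-rate formula above as a short Python sketch. The weekly input values are hypothetical, chosen only so that the two means match this example.

```python
from statistics import fmean
from typing import Sequence


def growth_rate_pct(historical: Sequence[float], forecast: Sequence[float]) -> float:
    """(Forecast Mean - Historical Mean) / Historical Mean × 100."""
    hist_mean = fmean(historical)
    if hist_mean == 0:
        raise ValueError("historical mean is zero; growth rate is undefined")
    return (fmean(forecast) - hist_mean) / hist_mean * 100


# Hypothetical weekly values whose means are 45,000 and 52,000.
rate = growth_rate_pct([44_000, 46_000], [51_000, 53_000])
print(f"Growth Rate: {rate:+.1f}%")
```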

SPYA Analysis (Same Period Year Ago)

SPYA Absolute Change

What it measures: Total difference between the forecast and actual values for the same period last year

Business Value: Seasonal comparison across business cycles

Example: Q4 forecast vs Q4 last year

SPYA Absolute Change: +125,000 units

Indicates that stronger Q4 performance is expected

SPYA Percentage Change

What it measures: Percentage growth vs the same period last year

Strategic Insights:

- Positive: Year-over-year growth

- Negative: Year-over-year decline

- Seasonal: Expected for cyclical businesses
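Here is a sketch of both SPYA measures for weekly data. It assumes the forecast window and the prior-year window are already aligned week by week, for example this year's Q4 forecast against last year's Q4 actuals. It then compares their totals. The function name `spya_change` is hypothetical.

```python
from typing import Sequence


def spya_change(
    forecast: Sequence[float], same_period_last_year: Sequence[float]
) -> tuple[float, float]:
    """Return (absolute change, percentage change) vs the same period a year ago.

    Both sequences must cover matching weeks; alignment is the caller's job.
    """
    if len(forecast) != len(same_period_last_year) or not forecast:
        raise ValueError("periods must be non-empty and the same length")
    current = sum(forecast)
    prior = sum(same_period_last_year)
    if prior == 0:
        raise ValueError("prior-year total is zero; percentage change is undefined")
    absolute = current - prior
    return absolute, 100 * absolute / prior


print(spya_change([110, 120], [100, 100]))
```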

Advanced Quality Metrics

Reliability and Confidence

Model Reliability Score (0-100)

Calculation: Accuracy-adjusted confidence measure

- 90-100: Extremely reliable, suitable for critical decisions

- 70-89: Good reliability, appropriate for most planning

- 50-69: Moderate reliability, use with additional validation

- < 50: Low reliability, consider alternative approaches

Forecast Stability Score

What it measures: Consistency of predictions across forecast horizon

- High Stability: Smooth, predictable forecasts

- Low Stability: Volatile predictions, higher uncertainty

- Business Impact: Planning complexity and risk assessment

Error Coefficient of Variation

Technical Measure: Standard deviation of errors / mean of actuals

Business Interpretation:

- < 0.05: Very consistent performance

- 0.05-0.10: Acceptable variability

- > 0.10: High variability, consider forecast ranges
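Here is a sketch of the error coefficient of variation, using the definition above. The guide does not specify population or sample standard deviation, so this sketch uses the population version (`statistics.pstdev`). It also assumes positive-valued data such as sales volumes. The band edges are an assumption: 0.05 counts as acceptable, and 0.10 is the top of the acceptable band.

```python
from statistics import fmean, pstdev
from typing import Sequence


def error_cv(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Population standard deviation of errors divided by the mean of actuals."""
    if len(actuals) != len(forecasts) or len(actuals) < 2:
        raise ValueError("need at least two paired observations")
    mean_actual = fmean(actuals)
    if mean_actual <= 0:
        raise ValueError("sketch assumes positive-valued data")
    errors = [a - f for a, f in zip(actuals, forecasts)]
    return pstdev(errors) / mean_actual


def interpret_error_cv(cv: float) -> str:
    """Apply the three bands listed above."""
    if cv < 0.05:
        return "Very consistent performance"
    if cv <= 0.10:
        return "Acceptable variability"
    return "High variability, consider forecast ranges"


cv = error_cv([100, 100, 100, 100], [98, 102, 98, 102])
print(round(cv, 3), interpret_error_cv(cv))
```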

Data Quality Indicators

Trend Strength

Scale: 0-1, where higher values indicate stronger trends

- > 0.7: Strong trend, reliable for extrapolation

- 0.3-0.7: Moderate trend, good for medium-term planning

- < 0.3: Weak trend, focus on short-term forecasts

Seasonality Strength

Scale: 0-1, where higher values indicate stronger seasonal patterns

- > 0.7: Strong seasonality, plan for seasonal variations

- 0.3-0.7: Moderate seasonality, consider seasonal factors

- < 0.3: Weak seasonality, focus on trend and level
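The guide does not document how SAM computes these two scores. A widely used definition comes from Hyndman and Athanasopoulos, Forecasting: Principles and Practice. It derives both scores from an additive decomposition into trend T, seasonal S, and remainder R, such as an STL decomposition:

- Trend strength = max(0, 1 - Var(R) / Var(T + R))
- Seasonality strength = max(0, 1 - Var(R) / Var(S + R))

The sketch below implements that definition from already-computed components. It illustrates what a 0-1 score of this kind means; it is not SAM's formula.

```python
from statistics import pvariance
from typing import Sequence


def _strength(component: Sequence[float], remainder: Sequence[float]) -> float:
    """max(0, 1 - Var(R) / Var(component + R)); always within 0-1."""
    if len(component) != len(remainder) or len(component) < 2:
        raise ValueError("need two aligned series with at least two points")
    combined = [c + r for c, r in zip(component, remainder)]
    combined_var = pvariance(combined)
    if combined_var == 0:
        return 0.0
    return max(0.0, 1 - pvariance(remainder) / combined_var)


def trend_strength(trend: Sequence[float], remainder: Sequence[float]) -> float:
    return _strength(trend, remainder)


def seasonality_strength(seasonal: Sequence[float], remainder: Sequence[float]) -> float:
    return _strength(seasonal, remainder)


# A steady upward trend with small noise scores close to 1.
print(trend_strength(list(range(8)), [0.1, -0.1] * 4))
```

When the remainder dominates, Var(R) approaches Var(T + R) and the score falls toward 0. That is the "weak trend" or "weak seasonality" end of the scales above.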

Model Performance Comparison

Model Rankings Table

Our executive summary includes a comprehensive comparison:

| Model | Accuracy Grade | MAPE | Reliability Score | Best Use Case |
|---|---|---|---|---|
| Prophet | Excellent | 4.2% | 94 | Strategic Planning |
| SARIMA | Good | 8.1% | 87 | Operational Forecasting |
| N-HiTS | Excellent | 3.8% | 96 | High-Stakes Decisions |
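To re-rank these rows yourself, order them by MAPE and use the reliability score as a tie-breaker. This sketch covers accuracy only. The recommendation engine described next also weighs business context, data characteristics, and computational cost, so its choice may differ. The `ModelResult` type is hypothetical.

```python
from typing import NamedTuple


class ModelResult(NamedTuple):
    model: str
    mape_pct: float
    reliability: int


# Values copied from the example table above.
results = [
    ModelResult("Prophet", 4.2, 94),
    ModelResult("SARIMA", 8.1, 87),
    ModelResult("N-HiTS", 3.8, 96),
]

# Lowest MAPE first; ties broken by the higher reliability score.
ranked = sorted(results, key=lambda r: (r.mape_pct, -r.reliability))
for position, r in enumerate(ranked, start=1):
    print(position, r.model, f"{r.mape_pct}%", r.reliability)
```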

Recommendation Engine

Best Model Selection: Our AI recommends the optimal model based on:

- Accuracy Performance: Out-of-sample validation results

- Business Context: Forecast horizon and use case requirements

- Data Characteristics: Trend, seasonality, and quality factors

- Computational Efficiency: Processing time and resource requirements

Risk Assessment Framework

High Confidence Scenarios (Use forecasts directly)

- Accuracy Grade: Excellent

- Confidence Level: High

- MAPE < 5%

- Reliability Score > 90

Medium Confidence Scenarios (Use ranges)

- Accuracy Grade: Good/Fair

- Confidence Level: Medium

- Consider forecast ± error bounds

- Develop contingency plans

Low Confidence Scenarios (Directional guidance only)

- Accuracy Grade: Fair/Poor

- Confidence Level: Low

- Focus on trend direction

- Frequent re-forecasting recommended
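You can encode the three scenarios as a rule of thumb. The lists above overlap: Fair appears under both Medium and Low. The hypothetical `risk_tier` helper therefore makes three assumptions:

- A forecast is High only when every High criterion is met.
- A forecast is Low whenever its confidence level is Low or its grade is Poor.
- Every other forecast is Medium.

```python
def risk_tier(grade: str, confidence: str, mape_pct: float, reliability: float) -> str:
    """Place a forecast in the High / Medium / Low confidence scenario.

    Assumption: Low wins whenever confidence is Low or the grade is Poor;
    High requires all four High criteria; everything else is Medium.
    """
    if (
        grade == "Excellent"
        and confidence == "High"
        and mape_pct < 5
        and reliability > 90
    ):
        return "High: use forecasts directly"
    if confidence == "Low" or grade == "Poor":
        return "Low: directional guidance only"
    return "Medium: use ranges and contingency plans"


print(risk_tier("Good", "Medium", 8.1, 87))
```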

AI-Generated Insights

Executive Summaries

What you get: Business-focused analysis for each forecast including:

- Performance assessment in business terms

- Key trends and growth opportunities

- Comparison to previous periods

- Strategic implications

Example:

"Product A shows 18% YoY growth with a reliability score of 87. Clear seasonal patterns indicate March peak demand. Significant acceleration from Q4's 8% growth suggests successful market strategies requiring capacity validation."

Actionable Recommendations

Categories:

- Inventory Management: Stock level recommendations

- Marketing Strategy: Timing and targeting suggestions

- Capacity Planning: Resource allocation guidance

- Risk Management: Issue mitigation strategies

Interpreting Forecast Charts

Visual Elements

- Blue Line (Actual): Historical performance data

- Red Line (Forecast): Model predictions

- Orange Shading: Absolute error magnitude

- Confidence Bands: Upper and lower prediction bounds
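This guide does not describe how SAM computes its confidence bands. A common simple approximation, sketched here as an assumption, places the bounds at forecast ± z × RMSE. Here z comes from the standard normal distribution for the chosen coverage, about 1.96 for 95%. The approximation presumes unbiased, roughly normal errors with constant spread. Unlike many model-based intervals, these bands therefore do not widen further into the horizon.

```python
from statistics import NormalDist
from typing import Sequence


def prediction_bounds(
    forecasts: Sequence[float], rmse: float, level: float = 0.95
) -> list[tuple[float, float]]:
    """Symmetric (lower, upper) bounds of forecast ± z * RMSE.

    Assumes unbiased, roughly normal errors with constant spread.
    """
    if not 0 < level < 1:
        raise ValueError("level must be between 0 and 1")
    z = NormalDist().inv_cdf(0.5 + level / 2)  # two-sided critical value
    return [(f - z * rmse, f + z * rmse) for f in forecasts]


print(prediction_bounds([100.0, 120.0], rmse=10.0))
```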

Pattern Recognition

- Seasonal Peaks: Regular high/low cycles

- Trend Lines: Overall growth or decline direction

- Volatility: Consistency vs variability in patterns

- Break Points: Significant pattern changes

Business Insights

- Peak Planning: Prepare for seasonal demand spikes

- Trough Management: Optimize during low-demand periods

- Growth Trajectory: Long-term expansion or contraction

- Pattern Changes: Market shifts or business evolution

Common Pitfalls to Avoid

1. Over-Relying on Low Confidence Forecasts

- Problem: Basing major decisions on forecasts with reliability scores below 70

- Solution: Use for directional guidance only

2. Ignoring Seasonal Patterns

- Problem: Not accounting for seasonality

- Solution: Review seasonality strength, adjust plans

3. Misinterpreting Confidence Intervals

- Problem: Treating ranges as exact predictions

- Solution: Use for scenario planning

4. Not Validating Against Business Context

- Problem: Accepting forecasts misaligned with business changes

- Solution: Validate AI insights against business knowledge